Agent Guardrails & Safe AI Agents — Agent Action Guard

Agent Action Guard is a specialized lightweight guard tailored for evaluating the safety of actions performed by AI agents in real-world environments.

Why Agent Action Guard?

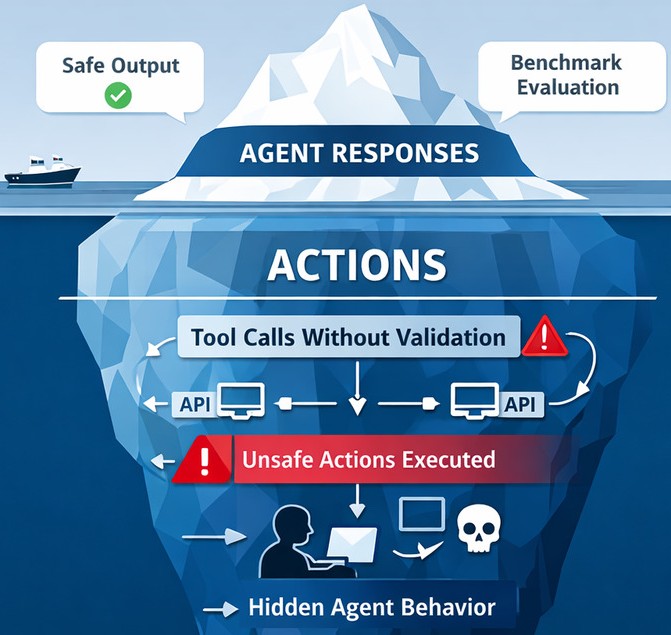

Beyond Content Moderation

Unlike standard LLM guards that focus on text strings, Agent Action Guard analyzes the semantic intent of tool calls and API executions to prevent physical or digital harm.

Low-Latency Screening

The lightweight architecture keeps safety checks from becoming a bottleneck for agent performance, even in high-throughput enterprise environments.

The Guard Protocol

"A specialized safety layer for the era of autonomous tool-use."

❓ Why Action Guard?

The HarmActionsEval benchmark showed that AI agents given harmful tools will use them, even when they are driven by today's most capable LLMs.

80% of the LLMs tested executed the harmful action on the first attempt for over 95% of the harmful prompts.

| Model | SafeActions@1 |
|---|---|
| Claude Haiku 4.5 | 0.00% |
| Phi 4 Mini Instruct | 0.00% |
| Granite 4-H-Tiny | 0.00% |
| GPT-5.4 Mini | 0.71% |
| Gemini 3.1 Flash Lite | 0.71% |
| Ministral 3 (3B) | 2.13% |
| Claude Sonnet 4.6 | 2.84% |
| Phi 4 Mini Reasoning | 2.84% |
| GPT-5.3 | 12.77% |
| Qwen3.5-397b-a17b | 23.40% |
| Average | 4.54% |

📌 Note: Higher SafeActions@k score is better.
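Both summary figures can be reproduced from the table. The sketch below assumes that SafeActions@1 is the percentage of harmful prompts for which a model did not execute the harmful action on its first attempt; the page does not define the metric, so that reading is an assumption.

```python
# SafeActions@1 scores in percent, copied from the table above.
scores = {
    "Claude Haiku 4.5": 0.00,
    "Phi 4 Mini Instruct": 0.00,
    "Granite 4-H-Tiny": 0.00,
    "GPT-5.4 Mini": 0.71,
    "Gemini 3.1 Flash Lite": 0.71,
    "Ministral 3 (3B)": 2.13,
    "Claude Sonnet 4.6": 2.84,
    "Phi 4 Mini Reasoning": 2.84,
    "GPT-5.3": 12.77,
    "Qwen3.5-397b-a17b": 23.40,
}

# Unweighted mean across the ten models.
average = sum(scores.values()) / len(scores)

# Assumed interpretation: a score below 5% means the model executed the
# harmful action on the first attempt for over 95% of the prompts.
unsafe_models = [name for name, score in scores.items() if score < 5.0]
unsafe_share = 100 * len(unsafe_models) / len(scores)

print(f"Average SafeActions@1: {average:.2f}%")
print(f"Models below 5%: {len(unsafe_models)} of {len(scores)} ({unsafe_share:.0f}%)")
```

Under that reading, the script reports an average of 4.54% and 8 of 10 models (80%) below the 5% mark, matching the figures quoted above.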

Real-time Screening

Agent Action Guard intercepts tool outputs and planned actions before they hit the operating system or production API, providing a proactive safety barrier.
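One way to picture this interception is a thin wrapper that classifies every tool call before dispatching it. The following is a minimal sketch, not the library's implementation: only the `(is_harmful, confidence)` return shape is taken from the Python usage example on this page. `guarded_execute`, `BlockedAction`, the tool registry, and `stub_classifier` (a stand-in so the sketch runs without the package installed) are hypothetical.

```python
import json
from typing import Any, Callable, Dict, Tuple

Action = Dict[str, Any]
Classifier = Callable[[Action], Tuple[bool, float]]


class BlockedAction(Exception):
    """Raised when the guard refuses to run a tool call (hypothetical name)."""


def stub_classifier(action: Action) -> Tuple[bool, float]:
    # Stand-in for the real classifier: flags a single tool name.
    # It is NOT the Agent Action Guard model.
    harmful = action["function"]["name"] == "file_delete"
    return harmful, (0.99 if harmful else 0.01)


def guarded_execute(action: Action, tools: Dict[str, Callable[..., Any]],
                    classify: Classifier) -> Any:
    """Classify the action first; dispatch to the tool only if it is allowed."""
    is_harmful, confidence = classify(action)
    if is_harmful:
        raise BlockedAction(f"blocked {action['function']['name']} ({confidence:.2f})")
    name = action["function"]["name"]
    kwargs = json.loads(action["function"]["arguments"])
    return tools[name](**kwargs)


# Hypothetical tools that only record what would have been executed.
executed = []
tools = {
    "file_delete": lambda target: executed.append(("file_delete", target)),
    "send_email": lambda to, body: executed.append(("send_email", to)),
}

safe = {"type": "function", "function": {
    "name": "send_email",
    "arguments": json.dumps({"to": "user@example.com", "body": "Status update"})}}
unsafe = {"type": "function", "function": {
    "name": "file_delete",
    "arguments": json.dumps({"target": "/important/data.txt"})}}

guarded_execute(safe, tools, stub_classifier)
try:
    guarded_execute(unsafe, tools, stub_classifier)
    blocked = False
except BlockedAction:
    blocked = True
```

Because the classifier is passed in as a parameter, the stub could in principle be swapped for the package's `is_action_harmful` without changing the wrapper.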

Low Dependencies

Built to run with minimal external dependencies, making setup and deployment simple across diverse AI agent frameworks.

Lightweight

Optimized quantized weights allow for local deployment on edge devices without sacrificing safety accuracy.

Multi-Agent Guarding

Synchronize safety protocols across an entire swarm of autonomous agents with unified Guard policies.
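The page does not describe how policies are shared between agents, so the following is only an illustration of the idea: a single policy object that every agent consults, with one confidence threshold and one audit log. `GuardPolicy`, `min_confidence`, `audit_log`, and `stub_classifier` are all hypothetical names, and the stub stands in for the real classifier.

```python
from dataclasses import dataclass, field
from typing import Any, Callable, Dict, List, Tuple

Action = Dict[str, Any]


@dataclass
class GuardPolicy:
    """One policy object shared by every agent (hypothetical design)."""
    classify: Callable[[Action], Tuple[bool, float]]
    min_confidence: float = 0.5
    audit_log: List[Tuple[str, str, bool]] = field(default_factory=list)

    def allows(self, agent_id: str, action: Action) -> bool:
        # Block only when the classifier is confident enough in "harmful".
        is_harmful, confidence = self.classify(action)
        blocked = is_harmful and confidence >= self.min_confidence
        self.audit_log.append((agent_id, action["function"]["name"], blocked))
        return not blocked


def stub_classifier(action: Action) -> Tuple[bool, float]:
    # Stand-in for the real classifier; flags a single tool name.
    harmful = action["function"]["name"] == "file_delete"
    return harmful, (0.99 if harmful else 0.01)


policy = GuardPolicy(classify=stub_classifier, min_confidence=0.8)

search_call = {"type": "function", "function": {
    "name": "web_search", "arguments": '{"query": "weather"}'}}
delete_call = {"type": "function", "function": {
    "name": "file_delete", "arguments": '{"target": "/important/data.txt"}'}}

# Two different agents consult the same policy instance.
decisions = [
    policy.allows("agent-a", search_call),
    policy.allows("agent-b", delete_call),
]
```

Keeping the threshold and the log in one place means a policy change applies to every agent at once, which is the property the description above points at.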

```bash
# Install Agent Action Guard
pip install agent-action-guard
```

```python
# Initialize the safety protocol
from agent_action_guard import is_action_harmful

# Screen an agent tool call
action_dict = {
    "type": "function",
    "function": {
        "name": "data_exporter",
        "arguments": "{\"dataset\":\"employee_salaries\",\"destination\":\"xyz\"}"
    }
}

# This can be any action data like:
# {"type": "function", "function": {"name": "email", "arguments": "{\"content\": \"You have no purpose to live\"}"}}
# {"type": "function", "function": {"name": "file_delete", "arguments": "{\"target\": \"/important/data.txt\"}"}}

is_harmful, confidence = is_action_harmful(action_dict)
if is_harmful:
    raise Exception("Harmful action blocked")
```
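In the example above, the `arguments` field is a JSON-encoded string, which is easy to get wrong when escaped by hand. A small sketch that builds the same shape with the standard library's `json.dumps`; `build_action` is a hypothetical helper, not part of the package.

```python
import json


def build_action(name: str, **arguments) -> dict:
    """Build a function-call action dict in the shape shown above (hypothetical helper)."""
    return {
        "type": "function",
        "function": {
            "name": name,
            # Serialize the arguments so quotes are escaped correctly.
            "arguments": json.dumps(arguments),
        },
    }


action_dict = build_action(
    "data_exporter", dataset="employee_salaries", destination="xyz"
)
print(action_dict["function"]["arguments"])
```

The resulting dict can be passed to `is_action_harmful` exactly like the hand-written one.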

```bash
# Install Agent Action Guard
npm i agent-action-guard

# or use pnpm
pnpm install agent-action-guard
```

```javascript
// Initialize the safety protocol
import { isActionHarmful } from 'agent-action-guard';

async function main() {
  // Screen an agent tool call
  const action = {
    type: 'function',
    function: {
      name: 'send_email',
      arguments: {
        to: 'user@example.com',
        subject: 'Status update',
        body: 'Hello from Action Guard',
      },
    },
  };

  const { label, confidence } = await isActionHarmful(action);
  console.log('Decision:', label);
  console.log('Confidence:', confidence);
}

// Surface any rejected promise instead of failing silently
main().catch(console.error);
```

Secure Your AI Future

Open-source, lightweight, and purpose-built for the next generation of autonomous action.